Optimizing User Experience Via A/B & Multivariate Testing

Here’s how most digital features are managed:

- A stakeholder (or product manager) has an idea.

- A team of user experience experts designs an experience.

- The stakeholder (or product manager) provides feedback.

- A final version is designed, implemented and released.

- The team moves on to the next big thing.

The problem with this approach, from a user experience optimization point of view, is that everything in the digital space can be done in more than one way.

However, the vast majority of companies select only one approach. They don’t mean to, but most digital features released to production are the result of “my way or the highway” thinking.

What if I told you that this approach – the most common way of building digital experiences – is causing companies to lose a lot of money? Or that it may reduce a company’s return on investment and its value to the end user, and may even cause a drop in customer satisfaction?

Sometimes, jumping forward towards building the next Big Thing is not the right call.

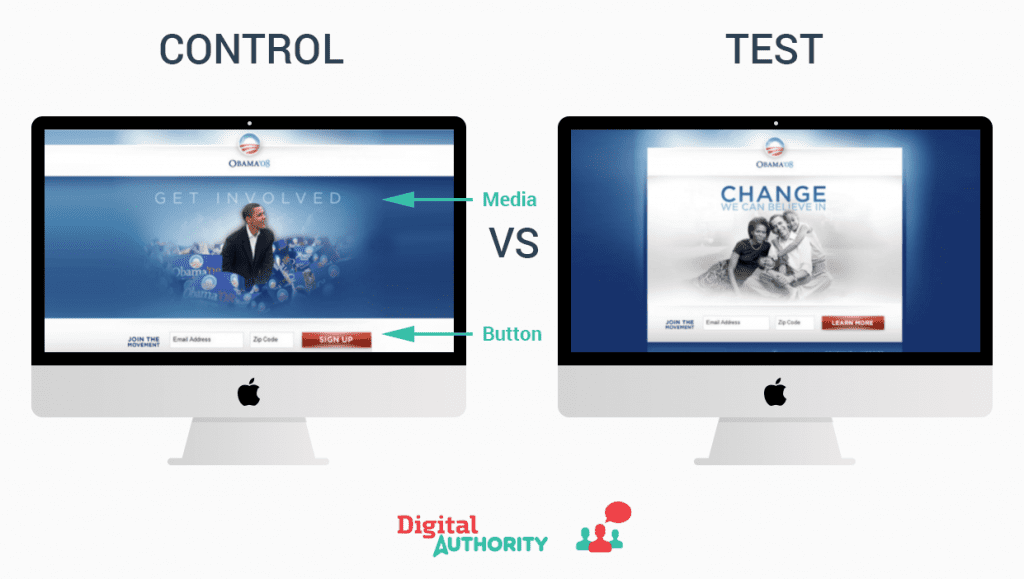

Sometimes it is better if you choose to optimize your site or app experience before adding new features and functionalities. For example, Obama’s re-election campaign in 2012 saw a staggering 49% increase in donations by running a simple test comparing two different user interfaces. They didn’t try to reinvent the wheel. Instead, they optimized the current experience and saw a significant increase in revenue through the online channel.

Before we go on, a simple definition:

A/B and multivariate testing are analysis and optimization methods that help businesses make better decisions and increase revenue by testing multiple versions of a digital asset to see which version performs best.

A/B and multivariate testing is the best approach to user experience optimization, leading to an increase in sales and user engagement.

In this ultimate guide to A/B and multivariate testing we will:

- Explain what A/B and multivariate testing are

- Explore A/B testing benefits

- Cover how companies can run effective testing

- Show you how to set up a test

- Discuss best practices for A/B testing and multivariate testing

A/B and Multivariate Testing

1. What Is A/B Testing?

A/B testing allows you to test multiple versions of a user experience so you can determine which is the most effective at achieving a specific business goal (e.g., conversion, sales, or engagement) using actual users on your website or app.

The idea is to reveal multiple variations of a user experience to your actual users and then measure the results to see which version performed best. It’s a way to quantitatively decide which experience your users prefer.

What sets A/B testing apart from other types of analysis (e.g., measuring before and after a change) is that it can tell you whether the result is statistically significant, so you can be confident you are making the right decision. The A/B and multivariate testing tool you choose will tell you how confident you should be that the change had a real impact, so you can judge whether the results are merely directional or whether you can rely on them with near certainty. If the tool you choose doesn’t have this feature built in, you can use a statistical significance calculator instead.
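To make the idea of statistical significance concrete, here is a minimal sketch of one common check, a two-proportion z-test, written with only the Python standard library. The function name and the visitor and conversion counts are made up for illustration, and commercial testing tools may use different statistical methods.

```python
import math

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: 5,000 visitors per variant.
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=260, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A p-value below the threshold you chose in advance (0.05 is a common convention) suggests the difference is unlikely to be due to chance alone.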

2. What Is Multivariate Testing?

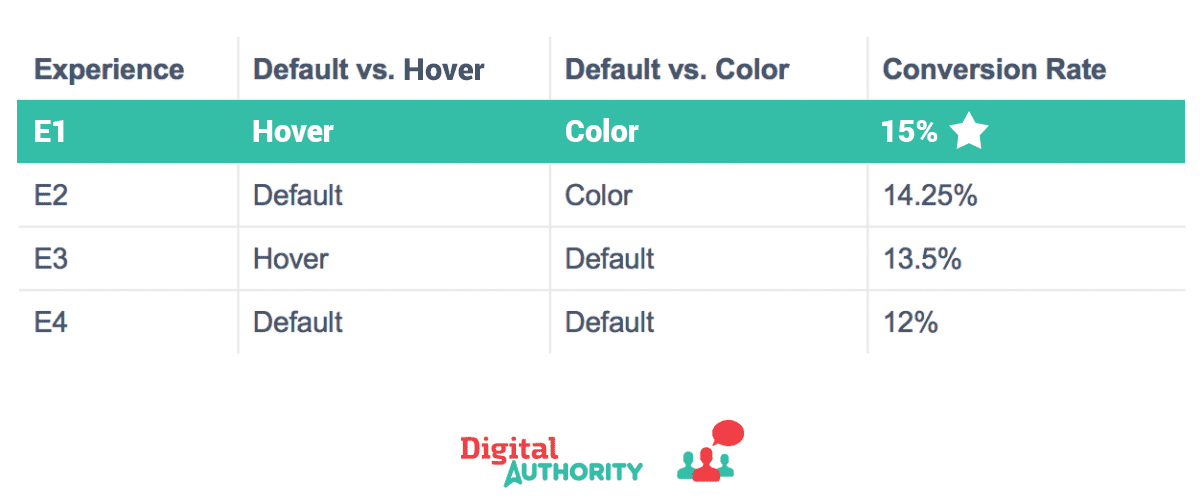

Your digital presence is made up of many elements you can adjust concurrently to improve the user experience. User testing best practices require changing many elements at once in order to determine the best combination.

Multivariate testing allows you to evaluate multiple landing page test ideas simultaneously. Through multivariate testing you will determine which combinations of changes lead to the best overall performance.

Let’s say you want to test the combination of a hover state on a button and a color for your “Add to Cart” call-to-action. Your goal is to understand what combination leads to the highest conversion rate. You can easily set up a multivariate test to try each of these options with your customers to determine which performs best.

To get you started, here are some multivariate testing examples:

One of our clients wanted to test various combinations of changes to its header, including: the size and location of the search bar, a ‘contact us’ button, and an updated header style. After all variations were tested, we found that a large search bar with a prominent ‘contact us’ button and the new header design led to the best results. This type of analysis is only possible with multivariate testing.
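A multivariate test covers every combination of the elements being changed, so the number of variants grows quickly. The sketch below enumerates the combinations for the “Add to Cart” example; the specific hover states and colors are hypothetical values chosen for illustration.

```python
from itertools import product

# Hypothetical factors for the "Add to Cart" example; the values are illustrative.
factors = {
    "hover_state": ["none", "darken"],
    "button_color": ["blue", "red", "green"],
}

def build_variants(factors):
    """Return one dict per combination of factor values."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]

variants = build_variants(factors)
for index, variant in enumerate(variants):
    print(index, variant)
```

Two hover states and three colors already produce six variants, and each one needs enough traffic to be measured reliably, which is why multivariate tests tend to suit higher-traffic pages.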

A real-world example of a company doing extensive multivariate tests is Facebook. Over the span of just a few days we saw Facebook testing the location of the notifications, feed, and messages in various placements. With these tests, Facebook can find the location for each button that gives the best user experience on its site.

Multivariate testing allows you to simultaneously test many changes across your user experience in order to best understand the optimum combination of changes.

A/B and multivariate testing is key to optimizing your design to achieve your business goals. A/B testing can be used for simple tests of a few variations. Multivariate testing should be used when you have multiple combinations of elements to test.

Why You Should Test

1. Improve your digital return on investment

User experience optimization is an efficient way to improve your bottom line. A/B and multivariate testing helps you squeeze every bit of value from your site or application.

A major B2B services company, one of our clients, saw a 300% increase in quotes by changing the position and UX of its call-to-action (CTA).

Obama’s campaign for re-election in 2012 saw a 49% increase in donations leading to 2.8 million incremental e-mail addresses from one of their tests.

In another example, Bing ran a test in which it slightly changed title colors, leading to an increase of over $10 million in revenue, according to Harvard Business Review.

2. Understand your customers better

Powerful A/B testing provides more information about your customers than you may realize. It lets you segment your customers on multiple levels to gain a deeper understanding of their behaviors.

You should be considering how to segment your customers, because not every user wants to interact with your site in the exact same way. Market segmentation allows companies to target and personalize to different customers.

You can look at the customers who performed best in your test as a cohort. By analyzing this best-performing cohort, you can learn more about what drives them to be your best customers.

For example, Twitter discovered through testing that its most loyal users were those who followed at least 9 other people right after signing up. Based on this finding, Twitter required new users to follow people upon signing up.

3. Confidently implement the right user experience

There are times when the cost of making a mistake is high. In these cases, it is critical to test before the feature goes live to all users.

A/B and multivariate testing empowers you to make confident choices knowing they are supported by statistically sound data. Once the test is complete, you will be able to say with confidence which experience leads to the best results.

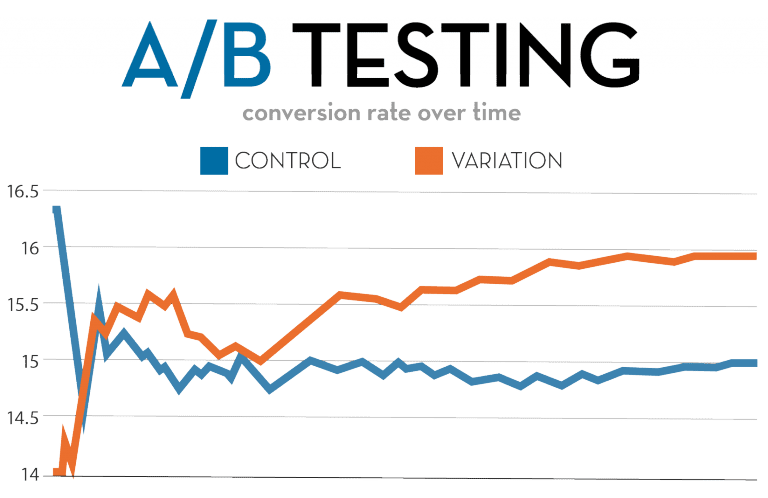

In the example above, you can see there is a significant difference in performance between the two elements being tested. This is clear from the big gap between the two lines measuring conversion across the two experiences.

You can confidently predict the outcome of pushing your test idea to 100% of your users. Using statistics built into the A/B testing application, you will be shown when the result becomes statistically significant. At this point you can confidently make that change the standard for all your users.

4. Solve disputes

If I had a nickel for every time I had a disagreement with another product manager, designer, UXA, business analyst, my boss, and the janitor downstairs about what is best from a user experience perspective – I’d be a rich man.

In digital strategy it is natural, even EXPECTED, that disagreements will arise. Why? Because digital strategy and its execution are as much a science as an art. Virtually everything a team member works on in digital strategy is subject to some level of creativity. When creativity is present, so is conflict. Every product team has disputes about the right way to do something. Should the button be red? Should we put multiple calls-to-action on the page? What if the button were above the fold?

The beauty of A/B testing is that all these disputes can be objectively settled. According to Think Creative Group, A/B testing “settles disputes among the members of your creative team regarding which website view will work best for visitors.”

If different people have different views on a certain functionality, why not build both and let your end users decide which is better for them?

A/B testing, by its very nature, is objective. It takes subjectivity and opinion out of the equation, thus providing clear information and eliminating the need for a debate.

How Does A/B and Multivariate Testing Work?

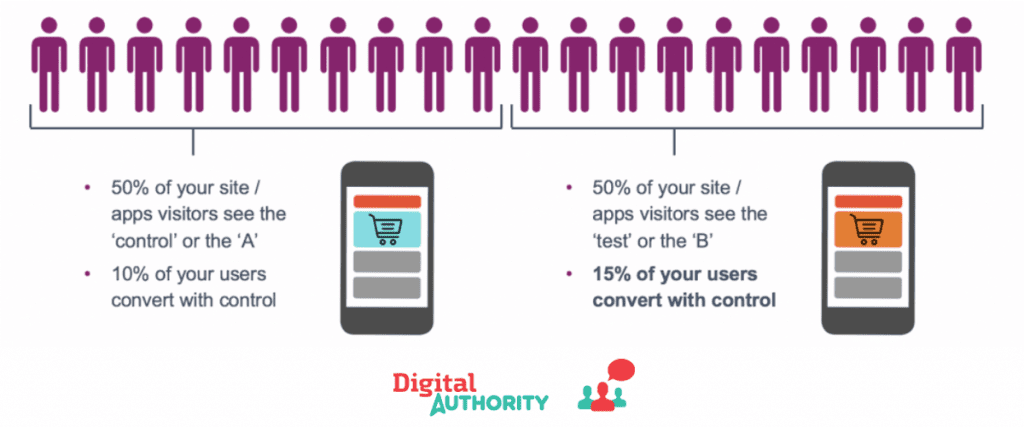

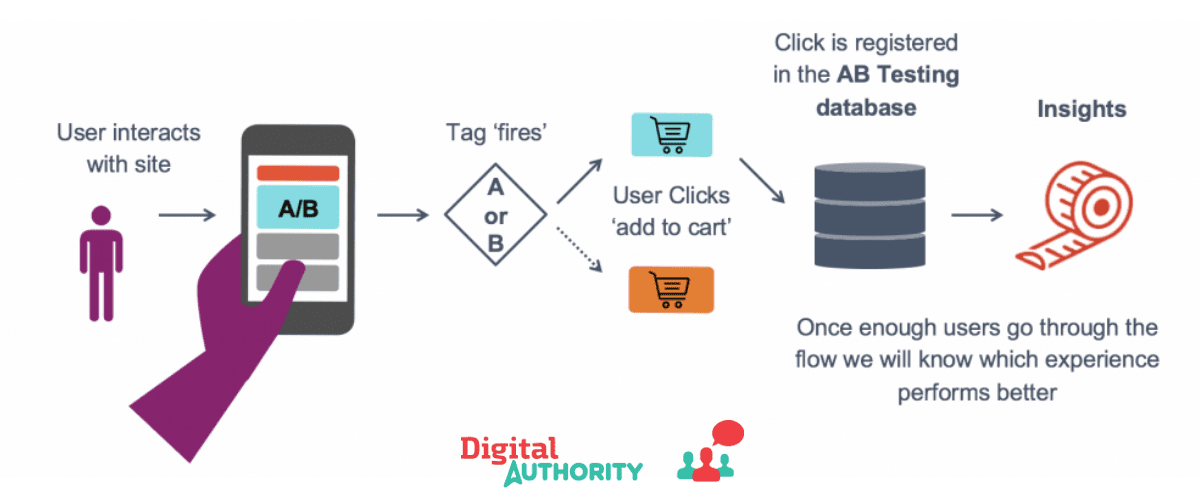

A/B and multivariate testing work by splitting user traffic among different versions of the user experience. This means that different users of your site will see different versions of your site. The testing tool then measures the impact of each version of the user experience to understand which performs best.

To understand it more technically, here is the basic process.

- A user goes to your site. This causes your A/B testing tag to fire, and pushes the user into one of the multiple variants that you created.

- The user then interacts with the functionality you are trying to test.

- If the user completes the expected task, the A/B testing software records that. If they don’t complete the expected task, that is also recorded.

- You can then view the results in your A/B testing tool (e.g., Optimizely, Maxymiser, VWO, Unbounce, Google Optimize) to choose the winning variant.
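The first step, pushing each user into a variant, can be sketched as follows. This is an illustration of one common technique (hashing a user ID so the same user always lands in the same variant), not the implementation used by any of the tools named above; the function name, experiment name, and variant labels are hypothetical.

```python
import hashlib

def assign_variant(user_id, experiment, variants):
    """Deterministically map a user to one variant of an experiment."""
    # Including the experiment name keeps assignments independent across tests.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Use the first 8 bytes of the hash as a large integer bucket number.
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]

# Hypothetical experiment with a control and one challenger.
print(assign_variant("user-42", "cta-color", ["control", "red-button"]))
```

Because the assignment depends only on the user ID and the experiment name, a returning user sees the same experience on every visit without the tool having to store the assignment.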

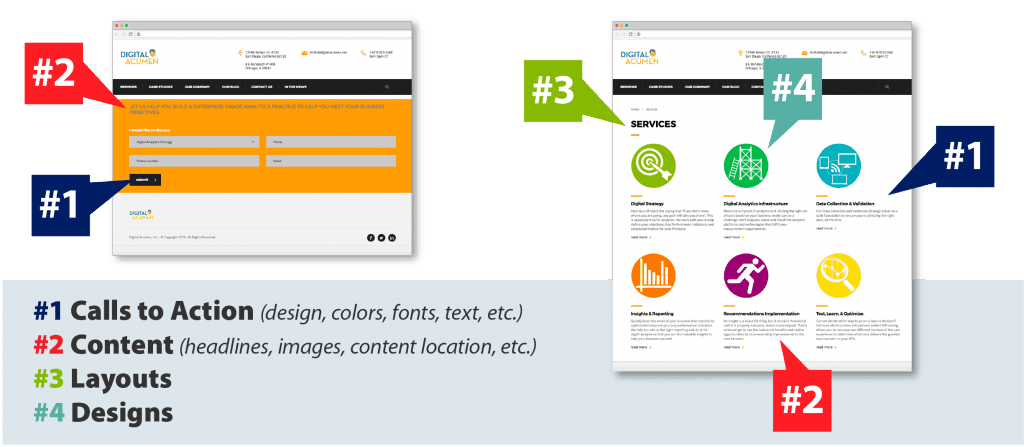

What Types of Things Can We Test?

One of the first steps is to determine what to test.

There are many types of things we can A/B test, including calls-to-action, content, layouts, designs, and promotions. Each has challenges and benefits that should be considered when creating your roadmap of tests.

We will examine types of tests that increase conversion and engagement.

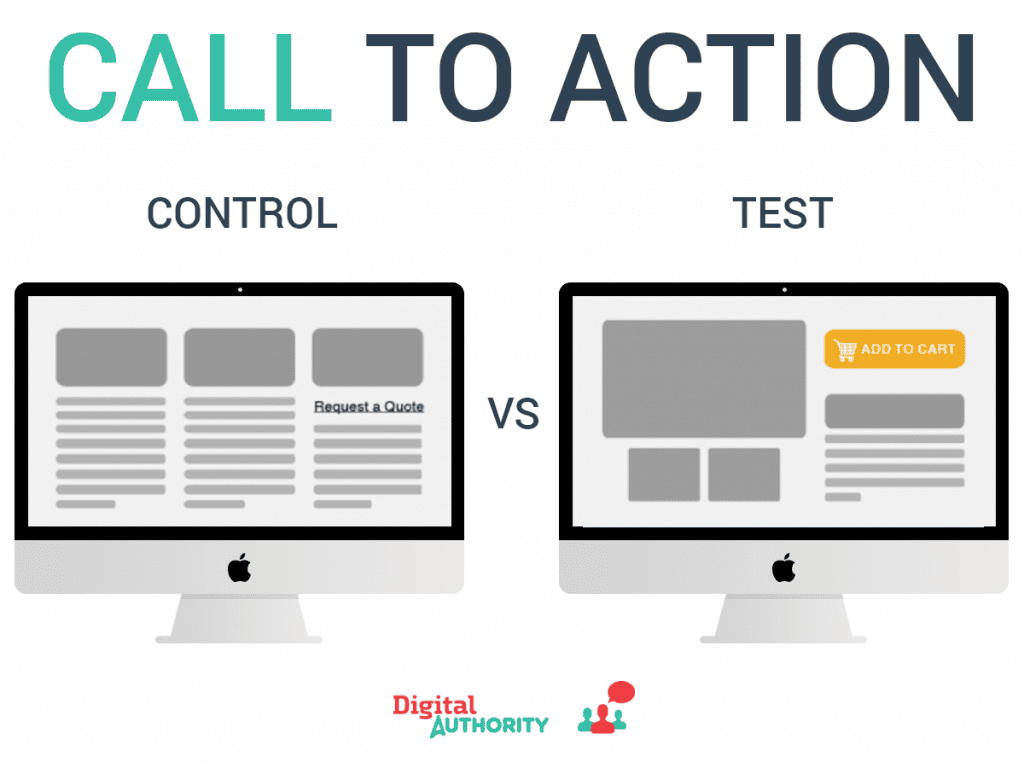

1. Test your calls-to-action (CTAs) to improve conversion

Call-to-action tests are an excellent and quick way to see improvements to your conversion rate. There are no hard-and-fast rules as to which calls to action perform best.

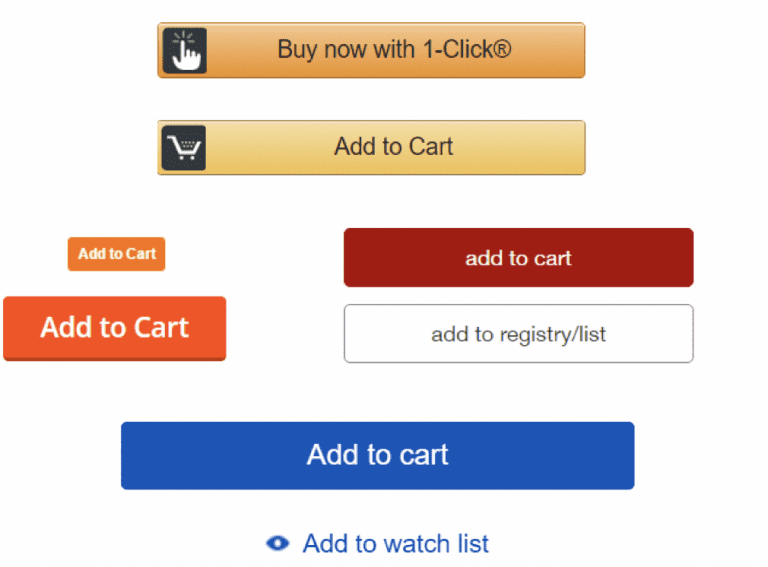

Just look at the large variety of calls to action below: rectangles, links, orange, red, yellow, large, small. Each of these could perform well for the site on which they are being tested.

On average, marketers who test their calls-to-action can expect to see a 25% increase in conversion, according to Business 2 Community.

As mentioned above, one of our clients saw a 300% increase in quotes simply by changing the position/copy of their Request a Quote CTA. Think about it – just moving the call-to-action on your page from point A to point B can result in a huge increase in revenue.

If you have not yet tried multiple variants of your CTAs, you would be wise to quickly consider this option because the tests are easy to do and can generate substantial revenue.

Examine just the calls to action below for the same concept from different companies (yup, Amazon is the first one). Just look at all the different things that could be tested and optimized for this specific CTA: colors, sizes, locations, buttons vs. links, number of calls to action (CTAs) on the page, primary and secondary CTAs.

A simple change like the one seen above could result in a huge improvement to your bottom line.

2. Test different layouts to optimize the design

Did you know that changing page layouts can increase conversion?

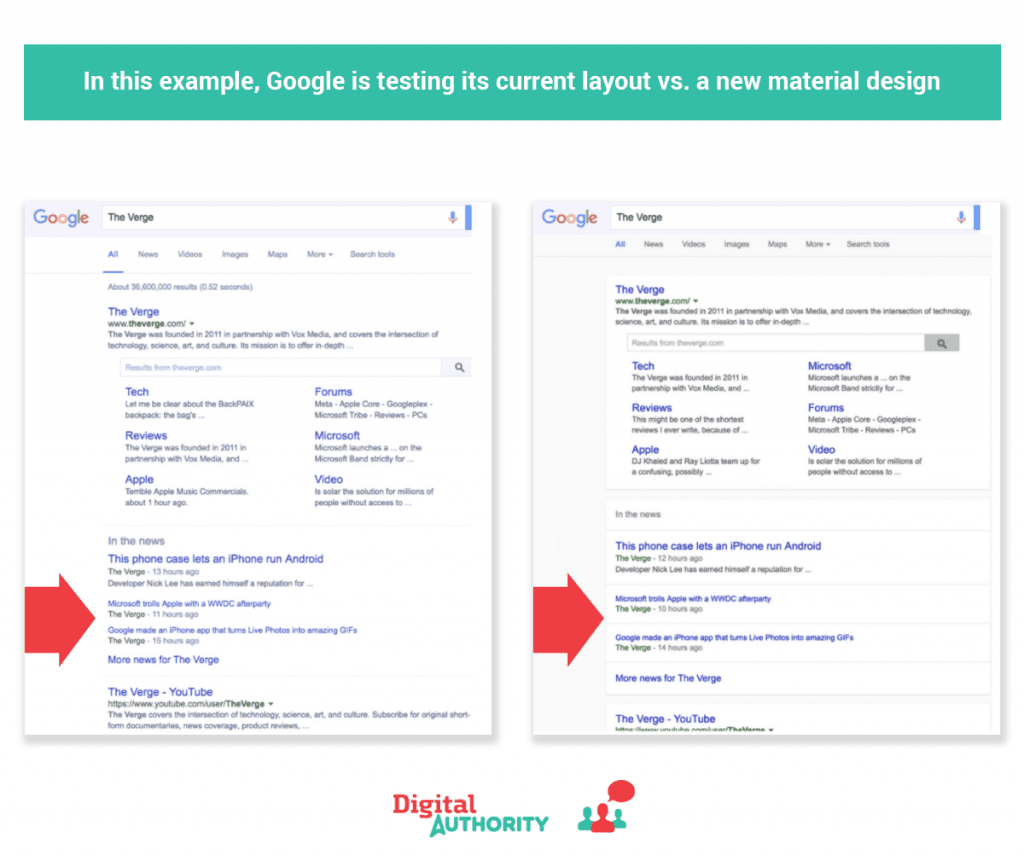

Google is testing a new layout using material design vs. its own design to determine which leads to higher engagement.

By layout changes I mean rearranging content/modules within an existing page. Note I said rearrange – NOT redesign – the page. This exercise only involves re-ordering / re-positioning items on the page.

Now – you might ask yourself: why on earth would I simply rearrange some elements on a page?

Suppose, for example, you see that some content positioned lower on your current page gets more clicks than items higher on the page. This happens more often than you might think, especially with complex websites that have many elements on any given page!

If that happens to your site, consider moving sections/sub-sections from the bottom of a page to the top, or moving a module from the right side of a page to the left.

3. Test your promotions

Test your promotions across your digital experience to increase engagement/conversion. You will be optimizing for revenue/margin from the campaign.

Examples: you can test things such as layout, size, design, colors, copy, and calls to action.

It makes sense to run this type of test if you run many promotions that account for a substantial portion of your revenue.

Promotions can look wildly different across sites and purposes. In many cases the differences are justified because the site has run tests to determine which promotion style works best. However, there are some best practices you can start with. For example, below you can see that most have a call-to-action, are bright, and don’t have too much text.

Every promotion can be optimized for the particular site, experience, time, location, user type, etc. to get the best results for the company.

4. Test your content

You can test versions of your content to see which resonates best with your customers.

It makes sense to run this type of test if your analytics data suggests that some types of content you’ve written perform better than others, but you’ve never been able to show them on the same page at the same time, so you can’t tell which truly performs best.

Examples: test tone, length of content, different titles, and images. We worked with a client to test various versions of their site navigation copy. In particular, we tested “Insights” compared with “Infocenter”. On the Digital Authority site we have also tested “Case Studies” versus “Work” to see which section title would attract more visitors.

Almost 90% of B2B companies are actively engaged in content marketing, making this a ripe area for optimization.

Which Tests Are The Most Successful?

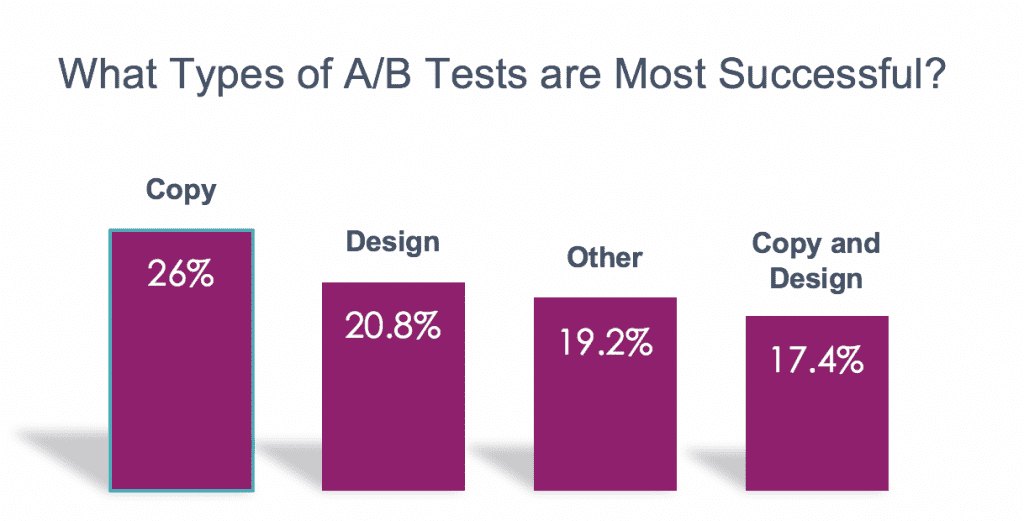

On average, different types of tests have different degrees of success in user experience optimization. Copy tests have the highest success rate, while design/copy tests have the lowest. Consider this when prioritizing which tests to run first.

The great part about starting with copy changes is that they are easy to make and lead to excellent results.

A Step by Step Guide To Set Up Your First Test

Now that I’ve told you what A/B split testing is, how it works, and which tests are most successful, let me show you how to run your first test.

1. Decide on an A/B and multivariate testing vendor

As with any vendor evaluation, you should follow these steps: write business requirements, define the set of A/B testing vendors to which you will send the request for proposal, send the request for proposal to the vendors, score their responses, set up demos with the top few, update the scoring, choose the vendor, and sign on the new vendor.

You will know you have selected the right vendor if it meets or exceeds your requirements. Here are specific requirements your A/B testing vendor should meet:

- Support for testing on responsive desktop sites and mobile applications

- Graphical user interface for business users in order to easily create tests without requiring any development coding

- Integrations with your analytics suite (e.g., Google Analytics, Amplitude)

- Ability to run many A/B and multivariate tests simultaneously

- Ability to view cohorts of your most profitable user segments

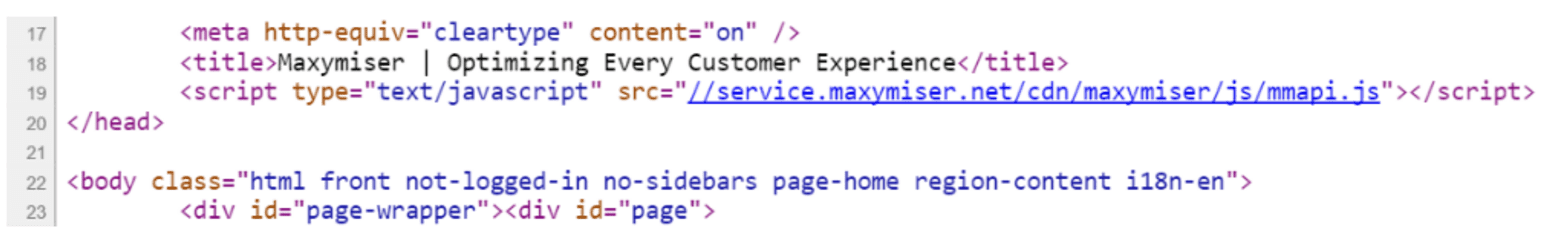

2. Deploy the A/B testing code on each of your pages.

Deploying the A/B testing framework is super simple for your development team to do. They just need to add a few lines of code to each page on your site/application on which you want to be able to do tests.

Example code:

Best practices:

- If you use a tag manager, be sure the tag is set to synchronous; you want this tag to fire and immediately give the user the right experience.

- In many cases, there are plugins available to make this process easier.

- Be sure to place the tag in the correct part of the page, usually right before the closing head tag.

3. Testing process

Define the test hypothesis

- Use user testing, analytics tool data, stakeholders, customers, etc. to determine what you should test. These may give you an idea of what you should change and what its impact will be.

- Formally define your test subject and expected outcome.

- Build a testable hypothesis. This is your guess about the effect a single change will have on your site.

For example, a test hypothesis might be: “Changing the color of the “buy” button from blue to red will cause a 10% increase in sales”.

Draft a test document based on all the items agreed to in step 1

- Create the variant. This requires you either to develop it or to build it in the A/B testing tool’s GUI.

- Assuming your site has enough traffic, and if you expect to see a big change between the variants, you can even test three, four, five, or more variants at once.

- Before putting the test into production, you must thoroughly test it for accuracy to ensure the A/B test will run smoothly and deliver clear results.
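How much traffic is “enough” depends on your baseline conversion rate and the size of the change you hope to detect. As a rough sketch, the standard sample-size approximation for comparing two proportions can be computed as shown here. The 5% baseline rate, the 5% significance level, and the 80% power are illustrative assumptions, not figures from any test in this article; the 10% relative lift echoes the example hypothesis above.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate users needed per variant to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    # Critical values for a two-sided test at level alpha and the desired power.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)

# Hypothetical: 5% baseline conversion, hoping to detect a 10% relative increase.
print(sample_size_per_variant(baseline_rate=0.05, relative_lift=0.10))
```

Small expected changes require far more users than large ones, and every additional variant needs its own share of traffic, which is worth weighing before you test several variants at once.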

Test and Deploy the test

- Ensure that no other changes or A/B tests that might interfere with the test go into production.

- Test that the tracking in both your analytics and A/B testing tools is working correctly.

- Check the experience in a pre-production environment to be sure it behaves and looks as expected.

- Push the experience to production (turn on the A/B test).

Preliminary results will arrive almost instantaneously

- Once results have reached statistical significance, one variant will emerge as the better performer.

- Report on the results to stakeholders.

- Assign the highest-performing variant as the default (in the A/B testing tool).

- Develop the variant to be the default (and shut down the A/B test).

- Document the results of the test (so you don’t run the same/similar test again by mistake).

Iterate

- You have now answered the question and used the findings to improve your site’s performance.

- However, there is always room to optimize further.

- It is time to consider the next iteration and the next burning question you would like to answer.

Implementation of Best A/B Testing Practices

1. Manage impact on SEO

- Don’t try to spoof search engines into not seeing your testing. Don’t have your tag automatically send a search engine to a specific user experience. This could cause a reduction in ranking with search engines.

- If your test redirects from one URL to a new URL, be sure it is a 302 (temporary) redirect.

- Be sure to shut off the test eventually and code the new experience. It will improve site performance.

2. User management

- Ensure proper accountability by keeping the number of people/roles who can publish to the web to a minimum, because anyone with this permission has direct access to make UX changes to the site. It is important to ensure that only the right things are tested. Keep in mind that testing necessarily means that real users of your site will see the experience. Thus, it is important to treat new tests with the same rigor as any other feature going to production.

3. Change history

- Be sure to manage the change history to identify any issues.

4. Define the size of the test pool appropriately

- If the risk is high that the test might not be successful, be careful in sizing the test pool. The test pool dictates how many users will see your test. Maybe 50% is too high. Try 5% until you start seeing preliminary results.
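One way to limit the test pool is sketched below, assuming users are identified by a stable ID. The function and experiment names are hypothetical, and commercial testing tools typically expose this as a traffic-allocation setting rather than requiring code.

```python
import hashlib

def in_test_pool(user_id, experiment, exposure):
    """Return True if the user falls inside the exposed share of traffic.

    exposure is a fraction between 0 and 1 (0.05 means 5% of users).
    """
    key = f"{experiment}:pool:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Map the hash to a stable number in the range [0, 1).
    position = int.from_bytes(digest[:8], "big") / 2**64
    return position < exposure

# Hypothetical rollout: start at 5%, widen to 50% once early results look safe.
print(in_test_pool("user-42", "new-checkout", 0.05))
print(in_test_pool("user-42", "new-checkout", 0.50))
```

Because each user’s position is fixed, raising the exposure from 5% to 50% only adds users to the pool; everyone who was already in the test stays in it.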

Conclusion

Get the most out of your digital experience by implementing a comprehensive A/B testing strategy for UX optimization. Don’t be like most product teams and shelve a feature/project once it is in production. Own it! Iterate on it.

Testing will help you increase your digital ROI, give you confidence that you are implementing the right user experience, reduce risk, and resolve internal product team disputes.

In this article we have covered the major aspects of why A/B tests are important for businesses, how to set them up, and how to learn from the findings to optimize the digital experience. Specifically, we covered:

- A/B and multivariate testing

- The importance of having an A/B testing strategy

- How A/B testing works

- What you can test

- How to implement A/B testing

- The testing process

With A/B and multivariate testing you can bring your digital experience to the next level.

Are you interested in web analytics services? Drop us a note at [email protected] or call us at 312-820-9893
